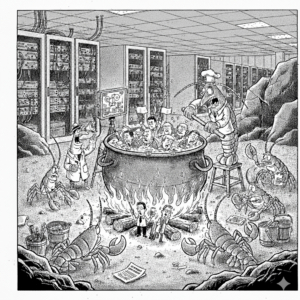

Who is boiling whom?

I feel compelled to defensively say, “I use LLMs every day”.

As I see it, there are two extremes at the moment: people who don’t use AI and those who are speed-running OpenClaw, Hermes, et al.

I am in the middle, allowing Claude Code read permissions on specific private source code repos, asking questions leading to assistance, and then creating pull requests based on the interaction. Maybe “left of center” LLM usage if you will.

I do admire the moxie and spirit of adventure as you get to the speed-running end of the spectrum. But what is the “quid pro quo” of agentic AI? What is the mix of artificial intelligence versus “artificial life”?

By artificial life I am referring to the seminal “Game of Life” by John Conway and the subsequent generations of a-life research. Wikipedia has a good overview and some visualizations of the a-life entities moving through 2-dimensional space. This paper from MIT gives a bit more of the thinking behind these generative experiments.

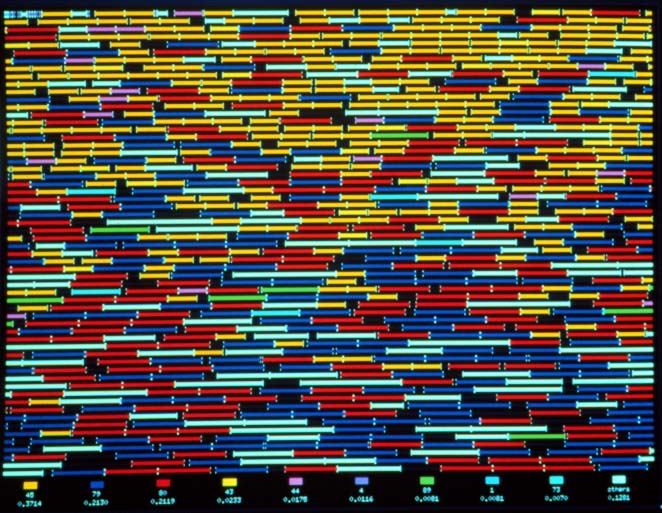

My favorite work in this space is what Thomas Ray did quite a number of years ago.

From: Tom Ray, “Tierra Photoessay”

These experiments show life-like characteristics without much of what would be called intelligence. Simple organisms, in a defined space, and a fairly small number of rules controlling the evolution. At the heart of organism success is the ability to claim space and replicate.
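To make the “small number of rules” point concrete, here is a minimal sketch of Conway's Game of Life, mentioned above, in Python. It is not Ray's Tierra, and the names `step` and `glider` are my own, chosen for illustration. The whole “physics” is the one-line rule at the end of `step`: a cell is alive in the next generation if it has exactly three live neighbors, or if it is already alive and has exactly two.

```python
from collections import Counter


def step(live):
    """Advance one generation. `live` is a set of (x, y) live cells."""
    # Count, for every cell adjacent to a live cell, how many live neighbors it has.
    counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Birth on exactly 3 neighbors; survival on 2 or 3.
    return {cell for cell, n in counts.items() if n == 3 or (n == 2 and cell in live)}


# A "glider": five cells that keep re-forming their own shape one step over.
glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}

state = glider
for _ in range(4):
    state = step(state)

print(sorted(state))
```

After four generations the glider should reappear shifted one cell diagonally. Nothing in the rule mentions movement; the organism claims new space simply because the rule lets it.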

I see this behavior happening in agentic AI. The agents are always driving for more resources, which is essentially the ability to reproduce. Look at what you get done with 2 agents; what about 10, or 20? Look at what you get done with Claude Pro; how about Claude Max, or $2k per month in Anthropic API tokens? 2 Mac minis, 5 Mac minis, 10 Mac minis. 10 Docker containers, 100, 1000!

The process becomes the product.

Lots of code and apps and blogs and videos come out of these agentic flows, certainly with some utility. I myself don’t need calorie or fitness trackers, nor digital servants to make meeting or restaurant reservations, but I see the appeal.

However, I don’t see minimization efforts. No mere ‘satisficing’.

I don’t see the refusals.

The agent saying “Let’s not go down that rabbit hole”.

Maybe I am one of the village elders on the ice floe drifting away, but I resonate with the apocryphal quote from Michelangelo about sculpting: “Every block of stone has a statue inside it and it is the task of the sculptor to discover it.”

Part of the ‘uncovering’, part of creation, is all the things you don’t do.

Likewise, I believe a good piece of software is the result of all the times you said, ‘NO’.

Now that the cost of “doing” is approaching zero, what is the value of the person or process that has the ability to say “don’t do”?

The problem is that “don’t do” flies in the face of “I want more”.

Agentic AI, meaning OpenClaw and its evolutionary relations, is entreating our friends, families and employees with “Feed me, Seymour!” It presents opportunities and costs that we will come to understand better over time, but it creates tangible risks today.